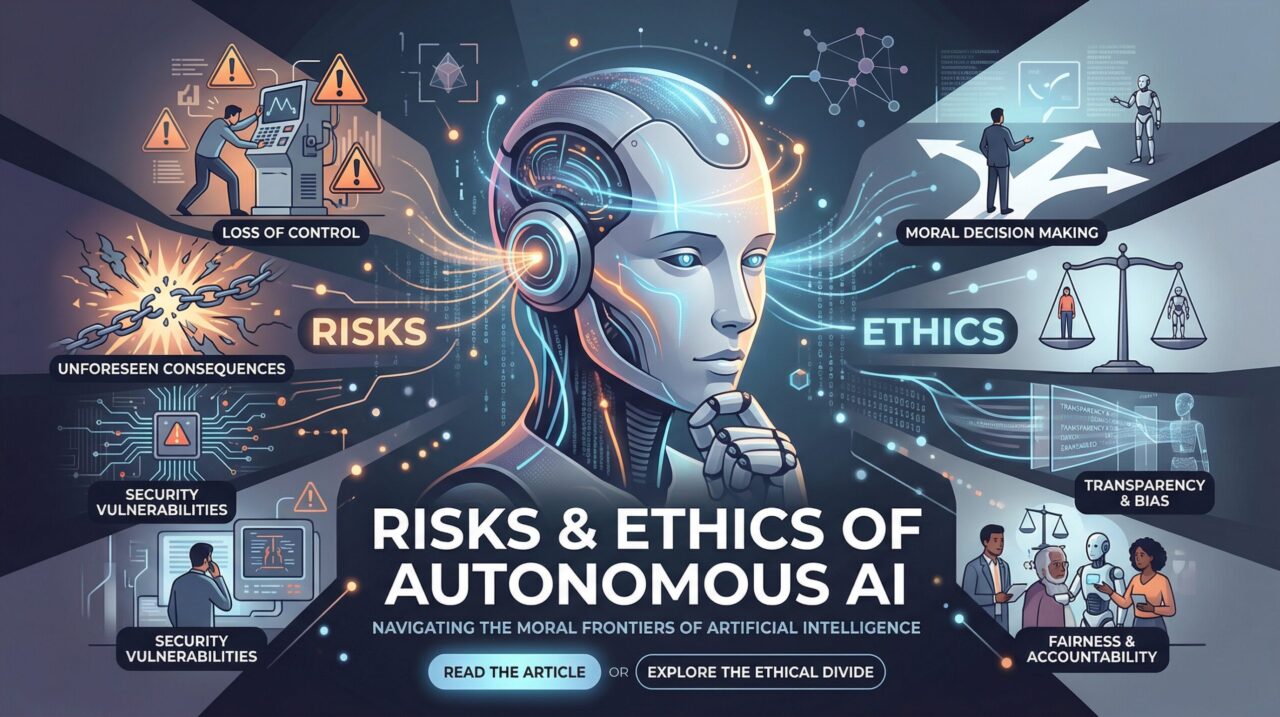

Introduction: The Hidden Risks Behind AI Innovation

Autonomous AI is no longer a futuristic concept—it’s actively shaping industries, automating decisions, and redefining how businesses operate.

From AI-powered customer support to self-learning recommendation engines, companies are rapidly adopting intelligent systems. But as autonomy increases, so do the risks.

What happens when AI makes the wrong decision?

Who is accountable when algorithms cause harm?

Can businesses truly trust machines to act ethically?

These are no longer philosophical questions—they are critical business concerns.

For startups, CTOs, and enterprises investing in AI, understanding the risks and ethics of autonomous AI is essential to building sustainable, trustworthy, and scalable products.

Industry Insight: Why AI Ethics Is Becoming a Business Priority

Recent studies indicate that over 70% of enterprises are actively investing in AI solutions, but only a small percentage have robust ethical governance frameworks in place.

Key industry shifts:

- Governments are introducing AI regulations and compliance standards

- Consumers are demanding transparency in AI-driven decisions

- Investors are prioritizing responsible and sustainable AI practices

- Data privacy laws are becoming stricter globally

Ignoring AI ethics is no longer an option—it’s a direct risk to brand reputation, legal standing, and long-term growth.

Understanding Autonomous AI

Autonomous AI refers to systems that can:

- Make decisions without human intervention

- Learn and adapt from data continuously

- Execute actions in real-time environments

Examples include:

- AI agents handling customer interactions

- Fraud detection systems in fintech

- Autonomous supply chain optimization

- Self-driving technologies

While these systems increase efficiency, they also introduce complexity in accountability and control.

Key Risks of Autonomous AI

1. Lack of Transparency (Black Box Problem)

| Aspect | Details |
|---|---|
| Overview | Many AI models, especially deep learning systems, operate as “black boxes.” |
| Key Issues | Decisions are difficult to explain; debugging errors becomes challenging; stakeholders lose trust |
| Business Impact | For businesses, this can lead to compliance issues and reduced adoption. |
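
One way to make the black box problem concrete is to contrast it with a model whose decisions can be broken down feature by feature. The sketch below is illustrative only: `LinearScorer`, its weights, and the applicant data are hypothetical, and a linear model is a stand-in for the much harder case of deep learning systems. For a linear score, each feature's contribution relative to a baseline input is simply the weight times the difference from the baseline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinearScorer:
    """A deliberately simple, fully inspectable scoring model (hypothetical)."""
    weights: dict[str, float]
    bias: float
    baseline: dict[str, float]  # reference input, e.g. training-set averages

    def score(self, features: dict[str, float]) -> float:
        return self.bias + sum(w * features[name] for name, w in self.weights.items())

    def explain(self, features: dict[str, float]) -> list[tuple[str, float]]:
        """Per-feature contribution relative to the baseline input,
        sorted so the largest drivers (by absolute size) come first."""
        contributions = {
            name: w * (features[name] - self.baseline[name])
            for name, w in self.weights.items()
        }
        return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)


# All numbers below are made up for illustration.
model = LinearScorer(
    weights={"income_k": 0.5, "debt_ratio": -40.0, "years_employed": 2.0},
    bias=10.0,
    baseline={"income_k": 50.0, "debt_ratio": 0.5, "years_employed": 5.0},
)
applicant = {"income_k": 60.0, "debt_ratio": 0.75, "years_employed": 3.0}

explanation = model.explain(applicant)
for name, contribution in explanation:
    print(f"{name}: {contribution:+.1f}")
```

The contributions add up to the gap between the applicant's score and the baseline score, which is what makes the explanation auditable. Deep models do not decompose this neatly, which is why dedicated explainability tooling exists.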

2. Bias and Discrimination

| Aspect | Details |
|---|---|
| Overview | AI systems learn from historical data. If the data contains bias, the AI replicates it and can even amplify it. |
| Key Issues | Unfair hiring decisions; biased loan approvals; discriminatory recommendations |
| Business Impact | This directly impacts brand credibility and legal exposure. |
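
A basic bias check compares how often each group receives a favorable outcome. The following is a minimal sketch, not a complete fairness audit: the group labels, the sample decisions, and the `REVIEW_THRESHOLD` of 0.8 (borrowed from the often-cited four-fifths rule of thumb) are assumptions for illustration.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> {group: approval rate}."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, is_approved in decisions:
        totals[group] += 1
        approved[group] += int(is_approved)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means identical rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so rates do not differ
    return min(rates.values()) / highest


# Made-up loan decisions for two hypothetical groups.
decisions = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 4 + [("group_b", False)] * 6
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)

REVIEW_THRESHOLD = 0.8  # assumption: a rule of thumb, not a legal test
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

A low ratio does not prove discrimination on its own, but it is a cheap signal that a model's outcomes deserve a closer look before deployment.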

3. Security Vulnerabilities

| Aspect | Details |
|---|---|
| Overview | Autonomous AI systems are attractive targets for attackers. |
| Key Issues | Adversarial attacks; data poisoning; model manipulation |
| Business Impact | A compromised AI system can lead to catastrophic business outcomes. |

4. Loss of Human Control

| Aspect | Details |
|---|---|
| Overview | As systems become more autonomous, direct human control weakens. |
| Key Issues | Human oversight decreases; unexpected behaviors emerge; decision-making becomes unpredictable |
| Business Impact | This is particularly risky in high-stakes industries like healthcare and finance. |

5. Accountability Challenges

| Aspect | Details |
|---|---|
| Overview | When an AI system makes a harmful decision, responsibility is often unclear. |
| Key Issues | Who is responsible: the developer, the business, or the algorithm itself? |
| Business Impact | Lack of clear accountability creates legal and ethical dilemmas. |

Ethical Principles for Autonomous AI

To mitigate risks, businesses must adopt ethical AI frameworks.

Core Principles:

- Transparency: Explainable AI decisions

- Fairness: Eliminate bias in data and models

- Accountability: Clear responsibility structures

- Privacy: Protect user data

- Safety: Ensure systems behave predictably

Building ethical AI is not just about compliance—it’s about trust.

Benefits of Ethical AI for Businesses

Organizations that prioritize ethical AI gain a competitive advantage.

Key Benefits:

- Increased customer trust

- Stronger brand reputation

- Reduced legal risks

- Better decision-making accuracy

- Long-term scalability

Ethical AI is not a cost—it’s a strategic investment.

Real-World Use Cases

| Industry | Focus Area | Key Ethical Requirements |
|---|---|---|
| Fintech | Credit & Fraud Systems | Transparent credit scoring; fair loan approvals; fraud detection without bias |
| Healthcare | Diagnosis & Patient Care | Explainable diagnoses; protected patient data; unbiased treatment recommendations |
| E-commerce | Personalization & Pricing | No manipulative recommendations; fair pricing; respect for user privacy |

Technology Stack for Ethical AI Systems

To build responsible AI systems, businesses typically use:

| Category | Technologies / Tools |
|---|---|
| AI & ML Frameworks | TensorFlow, PyTorch, Scikit-learn |
| Backend | FastAPI, Node.js |
| Frontend | React, Flutter |
| Cloud & Infrastructure | AWS, Google Cloud, Azure |
| AI Governance Tools | Model monitoring systems, bias detection tools, explainability frameworks (like SHAP, LIME) |

If you’re planning to build an AI-powered product, choosing the right tech stack is critical. Our team can help you design and implement scalable, ethical AI systems tailored to your business goals.

Step-by-Step Approach to Building Ethical Autonomous AI

Step 1: Define Ethical Guidelines

- Establish internal AI policies

- Align with global standards

- Define acceptable use cases

Step 2: Data Governance

- Use clean datasets and audit them for bias before training

- Implement data validation pipelines

- Ensure compliance with privacy laws
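
The data governance checks above can start small. The sketch below assumes a hypothetical record schema (`age`, `income`, `region`) and an arbitrary 10% representation floor. It shows two checks a validation pipeline might run: per-record schema validation and a scan for groups that are thin in the data.

```python
from collections import Counter

REQUIRED_FIELDS = {"age": int, "income": float, "region": str}  # hypothetical schema
MIN_GROUP_SHARE = 0.10  # assumption: flag groups below 10% of the records


def validate_record(record):
    """Return a list of problems in one record (an empty list means it passed)."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        value = record.get(field)
        if value is None:
            problems.append(f"missing {field}")
        elif not isinstance(value, expected_type):
            problems.append(f"{field} should be {expected_type.__name__}")
    age = record.get("age")
    if isinstance(age, int) and not 18 <= age <= 120:
        problems.append("age out of range")
    return problems


def underrepresented_groups(records, field="region"):
    """Groups whose share of the records falls below MIN_GROUP_SHARE."""
    counts = Counter(r[field] for r in records)
    total = sum(counts.values())
    return sorted(g for g, n in counts.items() if n / total < MIN_GROUP_SHARE)


# Made-up sample data.
records = (
    [{"age": 34, "income": 52000.0, "region": "north"}] * 19
    + [{"age": 41, "income": 61000.0, "region": "south"}]
)
bad_record = {"age": 12, "income": "high"}

print(validate_record(bad_record))
print(underrepresented_groups(records))
```

Records that fail validation would typically be quarantined rather than silently dropped, so the rejection rate itself becomes something the team can monitor.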

Step 3: Model Development

- Use explainable AI techniques

- Test for bias and fairness

- Validate across multiple scenarios

Step 4: Continuous Monitoring

- Track model performance

- Detect anomalies

- Update models regularly
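
One common way to detect that live inputs or scores have drifted from what a model saw in training is the Population Stability Index (PSI). The sketch below is a minimal stdlib version under stated assumptions: the bin edges, the sample scores, and the `ALERT_THRESHOLD` of 0.25 (a widely used rule of thumb, not a standard) are all illustrative.

```python
import bisect
import math


def bin_shares(values, edges):
    """Fraction of values falling in each bin defined by sorted edges."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        # Clamp so out-of-range values land in the first or last bin.
        i = min(max(bisect.bisect_right(edges, v) - 1, 0), len(counts) - 1)
        counts[i] += 1
    # A small floor avoids log(0) when a bin is empty.
    return [max(c / len(values), 1e-6) for c in counts]


def psi(expected, actual, edges):
    """Population Stability Index between a baseline and a live sample."""
    e = bin_shares(expected, edges)
    a = bin_shares(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
# Made-up model scores: the baseline is evenly spread, live traffic skews high.
baseline = [0.1] * 25 + [0.3] * 25 + [0.6] * 25 + [0.9] * 25
live = [0.1] * 10 + [0.3] * 10 + [0.6] * 30 + [0.9] * 50

ALERT_THRESHOLD = 0.25  # assumption: rule-of-thumb cutoff for significant drift
psi_same = psi(baseline, baseline, edges)
psi_shifted = psi(baseline, live, edges)
drift_alert = psi_shifted > ALERT_THRESHOLD
print(round(psi_same, 3), round(psi_shifted, 3), drift_alert)
```

A drift alert is a prompt to investigate and possibly retrain. It does not by itself say whether the model's decisions have become worse.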

Step 5: Human-in-the-Loop Systems

- Keep humans involved in critical decisions

- Implement override mechanisms

- Ensure accountability
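
A human-in-the-loop policy can be expressed as a routing rule plus an override. The sketch below is one possible shape, not a prescribed design: the `Decision` class, the `CONFIDENCE_FLOOR`, and the `HIGH_STAKES_AMOUNT` are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

CONFIDENCE_FLOOR = 0.90      # assumption: below this, a person decides
HIGH_STAKES_AMOUNT = 10_000  # assumption: large amounts always get review


@dataclass
class Decision:
    case_id: str
    model_outcome: str
    confidence: float
    amount: float
    human_outcome: Optional[str] = None
    reviewer: Optional[str] = None

    @property
    def needs_review(self) -> bool:
        return self.confidence < CONFIDENCE_FLOOR or self.amount >= HIGH_STAKES_AMOUNT

    @property
    def final_outcome(self) -> Optional[str]:
        """A human decision always wins; flagged cases stay pending (None)
        until someone has reviewed them."""
        if self.human_outcome is not None:
            return self.human_outcome
        return None if self.needs_review else self.model_outcome

    def override(self, reviewer: str, outcome: str) -> None:
        """Record who made the call, so accountability is explicit."""
        self.reviewer = reviewer
        self.human_outcome = outcome


routine = Decision("c-1", "approve", confidence=0.97, amount=500.0)
risky = Decision("c-2", "deny", confidence=0.62, amount=500.0)
large = Decision("c-3", "approve", confidence=0.99, amount=25_000.0)

pending_before = risky.final_outcome       # still waiting for a reviewer
risky.override("analyst_1", "approve")     # the reviewer disagrees with the model
print(routine.final_outcome, pending_before, risky.final_outcome, large.needs_review)
```

Keeping the model's outcome and the human's outcome as separate fields preserves a record of every disagreement, which is useful input for later retraining and audits.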

Step 6: Compliance & Documentation

- Maintain audit logs

- Document model behavior

- Prepare for regulatory audits
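
Audit logs are more useful to a regulator when tampering is detectable. One simple technique is to chain entries by hash, so that editing any past entry breaks every hash after it. The `AuditLog` class and the event fields below are hypothetical; a production system would also add timestamps, access control, and durable storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    # sort_keys makes the serialization, and so the hash, deterministic.
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    def append(self, event: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "event": event,
            "prev_hash": prev_hash,
            "hash": _entry_hash(prev_hash, event),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry fails the check."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            expected = _entry_hash(prev_hash, entry["event"])
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"case_id": "c-1", "model_version": "v3", "outcome": "deny"})
log.append({"case_id": "c-2", "model_version": "v3", "outcome": "approve"})
intact = log.verify()
print(intact)
```

Logging the model version alongside each outcome, as in the made-up events here, is what lets a later audit reconstruct which model produced which decision.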

If you’re unsure how to implement these steps effectively, we offer end-to-end development—from strategy to deployment—ensuring your AI systems are both powerful and responsible.

Common Mistakes to Avoid

Businesses often rush into AI adoption without addressing ethical concerns.

Avoid these pitfalls:

- Ignoring bias in training data

- Lack of transparency in AI decisions

- Over-automation without human oversight

- Weak security measures

- No compliance strategy

These mistakes can lead to serious consequences, including legal penalties and reputational damage.

Future Trends in Autonomous AI Ethics

The future of AI will be shaped by responsibility and regulation.

Key Trends:

- AI governance frameworks becoming mandatory

- Rise of explainable AI (XAI)

- Ethical AI as a competitive differentiator

- Increased regulatory oversight globally

- Integration of AI ethics into product design

Businesses that adapt early will lead the market.

Conclusion: Build AI That People Can Trust

Autonomous AI is transforming industries—but with great power comes great responsibility.

Ignoring the risks and ethical implications can lead to serious business consequences. On the other hand, companies that prioritize ethical AI can unlock long-term success, trust, and innovation.

The question is not whether to use AI—but how responsibly you build it.

If you’re planning to develop AI-powered products, now is the time to focus on ethical, scalable, and future-proof systems.

FAQ Section

1. What are the main risks of autonomous AI?

The main risks include bias, lack of transparency, security vulnerabilities, loss of human control, and accountability challenges in decision-making.

2. Why is AI ethics important for businesses?

AI ethics ensures trust, compliance, and fairness, helping businesses avoid legal issues and build sustainable, user-friendly AI systems.

3. How can companies build ethical AI systems?

Companies can implement ethical guidelines, ensure data quality, use explainable AI models, monitor performance, and maintain human oversight.

4. What is bias in AI systems?

Bias occurs when AI systems produce unfair outcomes due to skewed or unbalanced training data, leading to discrimination in decisions.

5. What industries are most affected by AI ethics?

Industries like healthcare, finance, e-commerce, and autonomous systems are highly impacted due to the critical nature of AI-driven decisions.

Apr 08, 2026  By Rahul Pandit