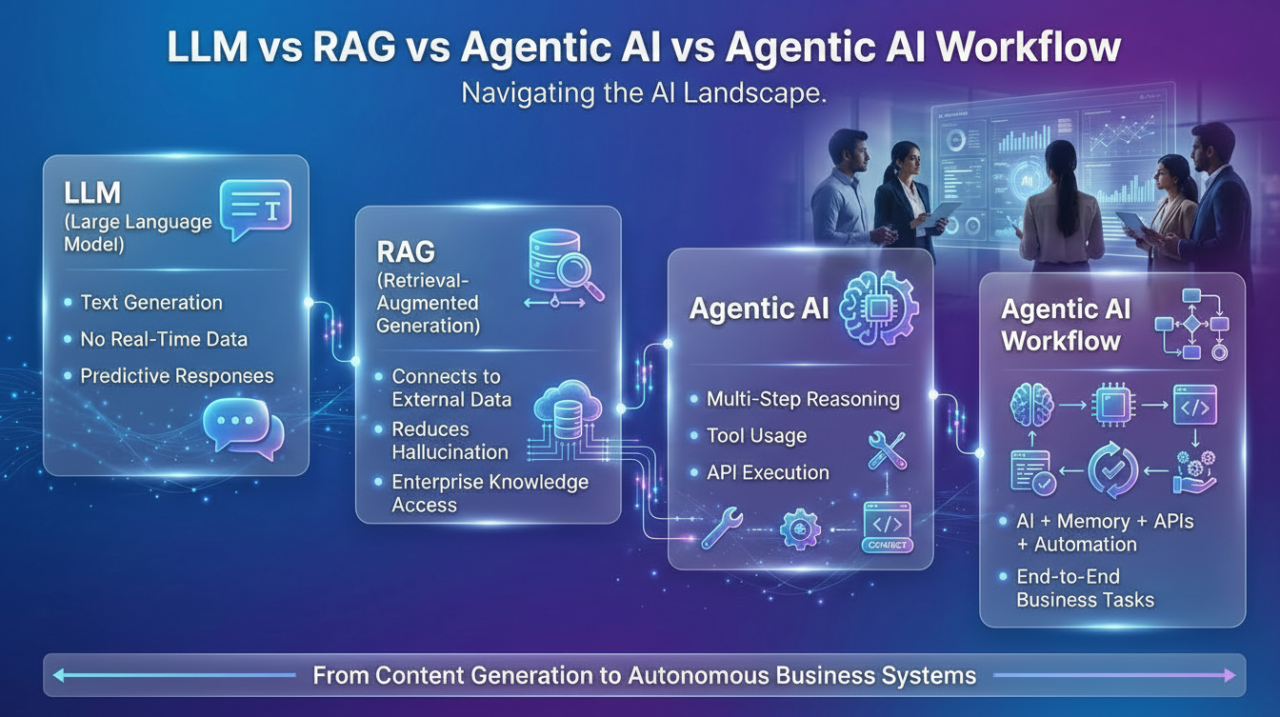

LLM vs RAG vs Agentic AI vs Agentic AI Workflow: What Businesses Must Know in 2026

AI is no longer experimental — it’s operational.

Startup founders, CTOs, and product leaders are constantly hearing terms like:

- LLM-powered apps

- RAG architecture

- Agentic AI systems

- Autonomous AI workflows

But here’s the problem:

Most businesses don’t know which AI approach fits their product vision, data structure, or scalability goals.

Choosing the wrong architecture can result in:

- High infrastructure cost

- Hallucinated outputs

- Security risks

- Poor scalability

- Limited automation capability

In this guide, we break down LLM vs RAG vs Agentic AI vs Agentic AI Workflow in practical business terms — so you can make informed technology decisions.

The AI Market Shift: From Chatbots to Autonomous Systems

The AI ecosystem is evolving rapidly. Businesses are moving from simple generative AI chatbots to intelligent systems capable of reasoning, retrieving knowledge, and executing tasks.

The evolution looks like this:

Text Generation → Knowledge-Grounded AI → Autonomous Decision-Making Systems

Understanding this progression is critical before investing in AI product development.

What is an LLM (Large Language Model)?

A Large Language Model (LLM) is a deep learning system trained on massive datasets to understand and generate human-like text.

What LLMs Do Well

- Content generation

- Summarization

- Translation

- Conversational interfaces

- Idea generation

Limitations of LLMs

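- Hallucinations (incorrect but confident answers)

- No real-time data access (knowledge stops at the training cutoff)

- No persistent memory (each request starts fresh unless prior context is passed back in)

- Cannot execute structured tasks independently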

Business Use Cases

- Marketing automation

- AI writing assistants

- Customer support chatbots

- Knowledge explanation tools

LLMs are powerful predictive engines — but they are not inherently operational systems.

What is RAG (Retrieval-Augmented Generation)?

RAG enhances LLMs by connecting them to external or private data sources.

Instead of relying only on training data, RAG systems:

- Retrieve relevant documents from a database

- Inject the context into the LLM prompt

- Generate grounded, contextual responses
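The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `DOCUMENTS` list, the bag-of-words `embed` function, and `fake_llm` are all hypothetical stand-ins for a vector database, a learned embedding model, and a real LLM API call.

```python
import math
import re
from collections import Counter

# Toy in-memory knowledge base (hypothetical); a real system would use a vector store.
DOCUMENTS = [
    "Refunds are processed within 5 business days of approval.",
    "Support is available Monday to Friday, 9am to 6pm.",
    "Enterprise plans include single sign-on and audit logs.",
]


def embed(text: str) -> Counter:
    """Stand-in embedding: word counts, not a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, k: int = 1) -> list:
    """Step 1: return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(DOCUMENTS, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list) -> str:
    """Step 2: inject the retrieved context into the prompt."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{joined}\n\n"
        f"Question: {query}"
    )


def fake_llm(prompt: str) -> str:
    """Hypothetical placeholder for a real LLM API call."""
    return f"[model response to a {len(prompt)}-character prompt]"


def answer(query: str) -> str:
    """Step 3: generate a response grounded in the retrieved context."""
    return fake_llm(build_prompt(query, retrieve(query)))
```

Calling `answer("How long do refunds take?")` retrieves the refund policy line and passes it to the model as context, so the response is anchored in your own data rather than in whatever the model memorized during training.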

Why RAG Matters for Enterprises

RAG is essential for organizations handling:

- Internal knowledge bases

- Legal documentation

- Healthcare records

- Financial data

- Standard operating procedures

Benefits of RAG Architecture

- Reduced hallucinations

- Higher answer accuracy

- Data control (private documents stay in a store you manage)

- Enterprise-grade reliability

Example Technology Stack

- Backend: Python (FastAPI) or Node.js

- Vector store: Pinecone, Weaviate, or FAISS (a similarity-search library rather than a full database)

- Embedding model

- LLM API integration

- Frontend: React (Web) or Flutter (Mobile)

- Cloud: AWS, Azure, or GCP

RAG transforms AI from a guessing engine into a knowledge-grounded assistant.

What is Agentic AI?

Agentic AI goes beyond answering questions. It performs actions.

An AI agent can:

- Plan multi-step tasks

- Use external tools

- Call APIs

- Access databases

- Make conditional decisions

- Maintain memory across sessions

In simple terms, Agentic AI behaves like a digital employee.
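To make the idea concrete, here is a minimal sketch of an agent loop. Everything in it is an assumption for illustration: the `lookup_order` and `send_email` tools are hypothetical, and `plan_next_step` is a rule-based stand-in for the LLM that would normally decide the next action. Memory here lasts only for one run; persisting it across sessions would need a database.

```python
from typing import Callable, Optional


# Hypothetical tools the agent is allowed to call.
def lookup_order(order_id: str) -> dict:
    return {"order_id": order_id, "status": "delayed"}


def send_email(to: str, body: str) -> dict:
    return {"sent": True, "to": to, "body": body}


TOOLS: dict = {
    "lookup_order": lookup_order,
    "send_email": send_email,
}


def plan_next_step(goal: str, memory: list) -> Optional[dict]:
    """Stand-in planner (rule-based so the sketch runs offline).

    A real agent would ask an LLM to choose the next tool from the goal
    and the memory of previous steps.
    """
    if not memory:
        return {"tool": "lookup_order", "args": {"order_id": "A-100"}}
    last = memory[-1]
    # Conditional decision: only email the customer if the order is delayed.
    if last["tool"] == "lookup_order" and last["result"]["status"] == "delayed":
        return {
            "tool": "send_email",
            "args": {"to": "customer@example.com", "body": "Your order is delayed."},
        }
    return None  # nothing left to do


def run_agent(goal: str, max_steps: int = 5) -> list:
    """Plan, act, and record results until the planner stops or the step cap is hit."""
    memory: list = []
    for _ in range(max_steps):
        step = plan_next_step(goal, memory)
        if step is None:
            break
        tool: Callable[..., dict] = TOOLS[step["tool"]]
        result = tool(**step["args"])
        memory.append({"tool": step["tool"], "result": result})
    return memory
```

The `max_steps` cap is a deliberate design choice: an agent that can call tools should always have a hard limit on how many actions it may take in one run.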

Business Example

In a SaaS CRM platform, an AI agent can:

- Analyze incoming leads

- Score them

- Send personalized emails

- Update CRM entries

- Schedule follow-ups automatically

This is operational automation — not just conversational AI.

If you’re planning to build something similar, our team can help design and implement a scalable AI architecture tailored to your product vision.

What is an Agentic AI Workflow?

An Agentic AI workflow is the structured orchestration of multiple AI agents, data sources, APIs, and logic layers to achieve business objectives.

Think of it as:

AI + Automation + Memory + APIs + Conditional Logic

Example Workflow (Healthcare Platform)

- Patient submits symptom form

- AI agent analyzes symptoms

- Retrieves medical history via RAG

- Suggests preliminary insights

- Schedules appointment

- Updates dashboard and sends reminders

This is not a chatbot — it is a fully integrated intelligent system.
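One way to picture the workflow above is as a pipeline of small steps that pass a shared state object along. The sketch below is illustrative only: `HISTORY_STORE` is a hypothetical stand-in for the RAG retrieval layer, the scheduling and notification steps are placeholders for real integrations, and the "insight" is a plain string meant for clinician review, not medical logic.

```python
from dataclasses import dataclass, field


@dataclass
class CaseState:
    """Shared state handed from one workflow step to the next."""
    patient_id: str
    symptoms: list
    history: list = field(default_factory=list)
    insights: str = ""
    appointment: str = ""
    log: list = field(default_factory=list)


# Hypothetical stand-in for a RAG lookup over medical records.
HISTORY_STORE = {"p-1": ["seasonal asthma"]}


def analyze_symptoms(state: CaseState) -> CaseState:
    # Normalize the submitted form; a real agent would call an LLM here.
    state.symptoms = [s.strip().lower() for s in state.symptoms if s.strip()]
    return state


def retrieve_history(state: CaseState) -> CaseState:
    state.history = HISTORY_STORE.get(state.patient_id, [])
    return state


def suggest_insights(state: CaseState) -> CaseState:
    history = ", ".join(state.history) or "no recorded history"
    state.insights = (
        f"For clinician review: {', '.join(state.symptoms)} (history: {history})"
    )
    return state


def schedule_appointment(state: CaseState) -> CaseState:
    state.appointment = "next-available-slot"  # placeholder for a booking API call
    return state


def notify(state: CaseState) -> CaseState:
    # Placeholder for the dashboard update and reminder integrations.
    return state


PIPELINE = [analyze_symptoms, retrieve_history, suggest_insights,
            schedule_appointment, notify]


def run_workflow(state: CaseState) -> CaseState:
    """Run each step in order and record it in the audit log."""
    for step in PIPELINE:
        state = step(state)
        state.log.append(step.__name__)
    return state
```

Keeping each step as a separate function makes it possible to test, replace, or audit any stage of the workflow on its own.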

LLM vs RAG vs Agentic AI: Business Comparison

| Capability | LLM | RAG | Agentic AI |
|---|---|---|---|
| Text Generation | Yes | Yes | Yes |
| Real-Time Data Access | No | Yes | Yes |
| Task Execution | No | Limited | Yes |
| Multi-Step Planning | Limited | Moderate | Advanced |
| Enterprise Scalability | Moderate | Strong | Very Strong |

Benefits for Businesses

1. Cost Optimization

Automation reduces manual workload and operational cost.

2. Higher Accuracy

RAG grounds responses in trusted data sources, which reduces hallucinations but does not eliminate them.

3. Scalable SaaS Infrastructure

Agentic workflows allow businesses to scale operations without proportional hiring.

4. Competitive Differentiation

Companies adopting structured AI workflows gain long-term technological advantage.

We offer end-to-end AI development — from idea validation and architecture planning to deployment and scaling.

Step-by-Step Development Approach

Step 1: Define Business Objectives

Clarify whether you need automation, knowledge retrieval, analytics, or a full AI workflow.

Step 2: Select the Right Architecture

Choose between LLM-only, RAG-based, or Agentic AI workflows.
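As a rough aid for this step, the decision can be written as a short function. The three yes/no questions and the function name are our own simplification of the goal-to-architecture mapping in this guide's conclusion; real projects usually weigh more factors, such as budget, compliance, and latency.

```python
def choose_architecture(
    needs_private_data: bool,
    needs_actions: bool,
    needs_orchestration: bool,
) -> str:
    """Map three yes/no needs to a starting architecture (a simplification)."""
    if needs_orchestration:
        # Several agents, data sources, and APIs coordinated end to end.
        return "Agentic AI Workflow"
    if needs_actions:
        # The system must execute tasks, not only answer questions.
        return "Agentic AI"
    if needs_private_data:
        # Answers must be grounded in your own documents.
        return "RAG"
    # Pure content generation.
    return "LLM"
```

For example, an internal policy assistant that only answers questions from company documents would map to `choose_architecture(True, False, False)`, which returns `"RAG"`.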

Step 3: Design Data Flow & Integrations

Map APIs, databases, and automation layers.

Step 4: Build an MVP

Start lean. Validate with real users before scaling.

Step 5: Implement Security & Compliance

Use encryption, authentication layers, and data isolation.

Step 6: Optimize and Scale

Implement monitoring, logging, and cloud cost management.
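A simple starting point for cost monitoring is to count usage on every model call. The sketch below is an assumption-heavy illustration: `PRICE_PER_1K_TOKENS_USD` is a made-up rate, and word counts are used as a crude proxy for tokens. In practice you would read token counts from your provider's API response and use its published pricing.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("llm_usage")

# Hypothetical price, for illustration only; check your provider's pricing.
PRICE_PER_1K_TOKENS_USD = 0.002


@dataclass
class UsageTracker:
    """Accumulates call counts and approximate token usage."""
    calls: int = 0
    tokens: int = 0

    def record(self, prompt: str, completion: str) -> None:
        # Crude proxy: one word is treated as one token.
        used = len(prompt.split()) + len(completion.split())
        self.calls += 1
        self.tokens += used
        logger.info("call=%d tokens=%d total=%d", self.calls, used, self.tokens)

    @property
    def estimated_cost_usd(self) -> float:
        return self.tokens / 1000 * PRICE_PER_1K_TOKENS_USD
```

Wrapping each model call with `tracker.record(prompt, completion)` gives you a running estimate that can feed budget alerts or dashboards.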

If you’re evaluating AI integration for your product, you can schedule a free consultation to explore feasibility and roadmap planning.

Common Mistakes to Avoid

- Using standalone LLMs for critical enterprise decisions

- Ignoring hallucination risks

- Over-engineering before validating an MVP

- Skipping the memory layer in agent-based systems

- Not planning cloud cost optimization

AI systems must be engineered strategically — not just integrated quickly.

Future Trends in AI Systems

- Multi-agent collaborative systems

- AI-native SaaS platforms

- Autonomous enterprise dashboards

- Vertical-specific AI frameworks

- Deeper integration with IoT and real-time data systems

Businesses that invest in structured AI workflows today will define tomorrow’s automation economy.

Conclusion

If your goal is:

- Content generation → LLM

- Knowledge-grounded answers → RAG

- Task automation → Agentic AI

- End-to-end intelligent operations → Agentic AI Workflow

The opportunity is not just in using AI — it’s in architecting AI systems strategically.

Talk to our experts and get a project estimate tailored to your business goals.

FAQ Section

1. What is the difference between LLM and RAG?

An LLM generates responses from what it learned during training, while RAG first retrieves relevant up-to-date or internal documents and then generates an answer from them, which improves accuracy and reliability.

2. Is Agentic AI better than RAG?

They serve different purposes. RAG improves knowledge accuracy, while Agentic AI enables task execution and automation. Many enterprise systems combine both.

3. When should a startup use an Agentic AI workflow?

Startups should adopt Agentic workflows when they need multi-step automation such as CRM updates, booking systems, lead processing, or operational task orchestration.

4. Can RAG and Agentic AI be combined?

Yes. A common architecture uses RAG for accurate knowledge retrieval and Agentic AI for executing actions based on that knowledge.

5. How much does it cost to build an Agentic AI system?

Costs vary depending on complexity, integrations, infrastructure, and scalability requirements. Most businesses begin with MVP validation and phased architecture planning.

Feb 26,2026  By Rahul Pandit
