LLM vs Generative AI – A Simple Story to Finally Understand the Difference

If you are a founder, CTO, or product manager, you have probably heard both terms in the same meeting: “We need an LLM strategy” and “We should build a Generative AI product.” The problem is that many teams use these phrases as if they mean the same thing. They do not.

That confusion may sound harmless, but it affects product direction, vendor selection, technical architecture, budget planning, and customer expectations. A company that thinks every AI feature needs an LLM may overbuild. A company that thinks every generative AI product is just a chatbot may miss larger opportunities in design, automation, search, and content operations.

So let’s simplify it with a story.

A simple story

Imagine your company wants to launch a new digital studio for customers. This studio can write product descriptions, generate campaign images, create training videos, summarize meetings, build knowledge assistants, and help support teams reply faster.

Now picture that studio as a creative agency.

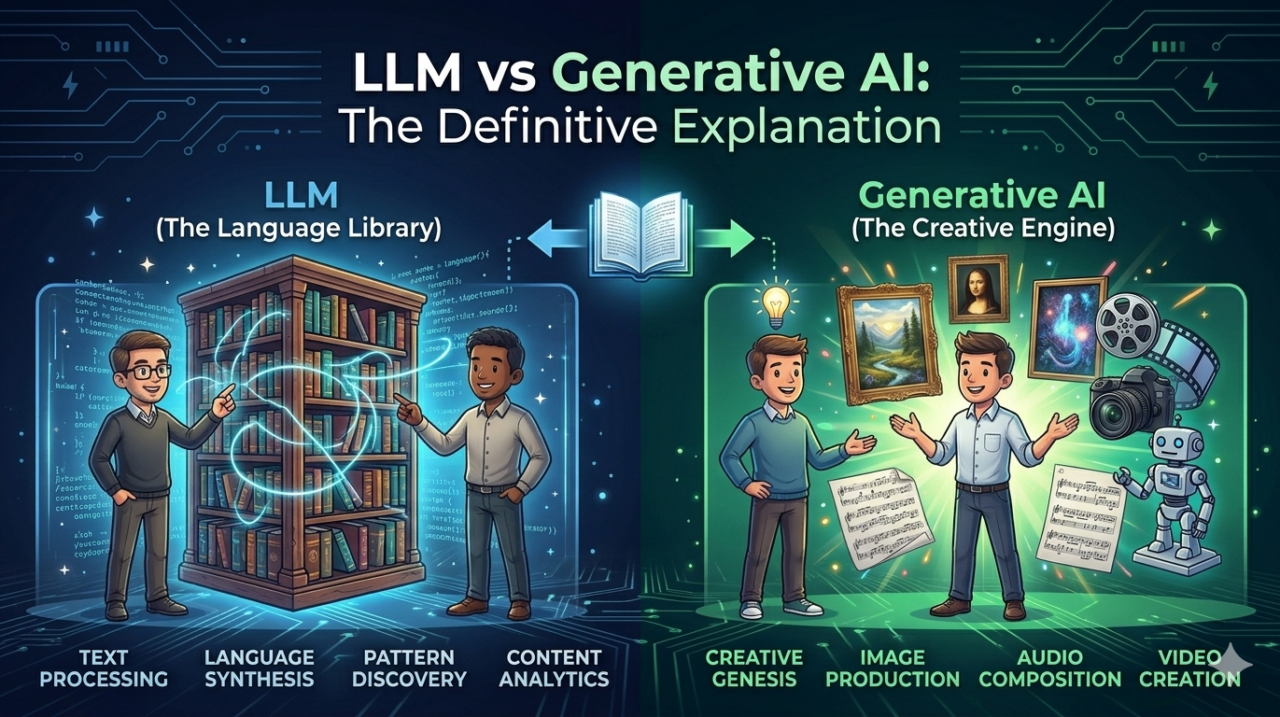

- The entire agency is Generative AI.

- The specialist writing team inside that agency is the LLM.

That is the easiest way to understand the difference.

Generative AI is the broader category of AI systems that create new content, including text, images, audio, video, and code. LLMs are a specialized subset of generative AI that focus on understanding and generating human language. In simple business terms, every LLM is part of generative AI, but generative AI includes much more than LLMs alone.

If your business needs a smart writing assistant, proposal generator, chatbot, or document summarizer, an LLM may be the core engine. If your business needs a broader system that can also create visuals, marketing assets, voice interactions, or multimodal experiences, you are thinking at the Generative AI level.

What Generative AI really means

Let’s stay with the agency example.

A creative agency does not only write copy. It also designs visuals, edits audio, creates video, and packages ideas for different channels. That is exactly how Generative AI works as a category. It refers to AI systems built to generate new content based on patterns learned from large datasets.

That content can take many forms:

- Text

- Images

- Audio

- Video

- Code

- Design variations

- Synthetic data

- 3D or multimodal outputs

This matters for business because the moment your product needs more than text, the conversation moves beyond just LLMs. For example, an e-commerce brand using AI to generate product images, ad copy, and customer support responses is using a broader generative AI stack, not only an LLM strategy. A SaaS company building document summarization, ticket drafting, and knowledge retrieval may rely heavily on LLMs, but it still sits inside a larger GenAI roadmap.

What an LLM really means

Now let’s zoom in on the writing team inside the agency.

Large Language Models (LLMs) are trained on large amounts of text to predict and generate language, which also lets them process and summarize it. They are especially effective at tasks like conversation, classification, extraction, rewriting, translation, summarization, and question answering. Modern LLMs are typically built on Transformer-based architectures designed to track context across long stretches of text.

That makes them useful for business workflows such as:

- Internal knowledge assistants

- Customer support copilots

- Proposal and email drafting

- Contract summarization

- Report generation

- Search and retrieval interfaces

- Test case and documentation support

- Workflow automation through natural language

So when someone says, “We need an LLM,” the better question is: for what language problem?

Because an LLM is not the whole solution by itself. It is often one layer inside a broader product that includes business rules, workflow logic, data sources, APIs, human approval steps, analytics, and security controls. That distinction is where mature product teams separate themselves from hype-driven teams.
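
To make the "one layer" idea concrete, here is a minimal sketch in Python. It assumes a support-ticket workflow; `fake_llm`, `SENSITIVE_TERMS`, and `draft_reply` are hypothetical names invented for illustration, and the model call is a stub rather than any real vendor API. The point is only that the business rule and the human approval flag live outside the model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Draft:
    text: str
    needs_human_approval: bool
    reason: str


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call; returns a canned reply."""
    return f"Draft reply for: {prompt}"


# Hypothetical business rule: tickets on these topics always go to a human.
SENSITIVE_TERMS = ("refund", "legal", "contract")


def draft_reply(ticket: str, llm: Callable[[str], str] = fake_llm) -> Draft:
    """Generate a draft, then apply a business rule the model knows nothing about."""
    text = llm(ticket)
    hits = [term for term in SENSITIVE_TERMS if term in ticket.lower()]
    if hits:
        return Draft(text, True, "sensitive topic: " + ", ".join(hits))
    return Draft(text, False, "auto-approved")
```

Swapping `fake_llm` for a real model client would not change the surrounding logic, which is why the model is only one layer of the product.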

Why business leaders should care

Understanding this difference is not academic. It directly improves decision-making.

1. It helps you define the right product scope

If you need text generation, semantic search, summarization, or conversational interfaces, an LLM-first approach makes sense. If you need image generation, voice synthesis, multimodal search, or creative asset pipelines, you need a broader generative AI strategy.

2. It improves vendor and team selection

Some vendors are strong at prompt engineering, retrieval systems, and language workflows. Others specialize in image generation, synthetic media, or multimodal AI. When your internal team understands the difference, you choose the right partner faster.

3. It prevents expensive overengineering

A common mistake is using an LLM for problems that do not need one, such as routing or lookups that a simple rule or keyword search could handle. Another is pitching a “GenAI platform” when the use case is really just document search plus summarization. Clear terminology creates cleaner architecture and better ROI.

4. It aligns product expectations

Business stakeholders often expect magic from AI. When you define whether the project is LLM-led or GenAI-led, it becomes easier to explain outputs, risks, latency, compliance needs, and quality controls.

If you’re planning to build something similar, our team can help map the business use case before you spend time on the wrong stack.

Real-world use cases

Here is a practical way to think about it.

LLM-led use cases

These are primarily language problems:

- AI chatbot for customer support

- Contract review assistant

- Internal knowledge base search

- Sales proposal generation

- Automated meeting notes

- Test case drafting and bug summary support

- Email classification and response suggestions

Broader Generative AI use cases

These go beyond language into multiple content types:

- Marketing campaign generation with copy and creative

- Product image generation for catalogs

- AI avatars for training and onboarding

- Voice-based assistants

- Video explainers generated from scripts

- Design variation generation for landing pages

- AI-powered 3D or visual asset generation

Hybrid use cases

Many modern products combine both.

For example, a real estate platform may use:

- An LLM to answer buyer questions

- Image generation to create visual concepts

- Voice AI for guided walkthroughs

- Workflow automation to qualify leads

That is why the smartest companies are not asking, “Should we use LLM or Generative AI?” They are asking, “Which mix of language, visual, and automation capabilities solves the customer problem best?”
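
The real estate example can be sketched as a small router that sends each request to the capability that fits it. This is an illustrative sketch only: the handler functions and the `HANDLERS` table are hypothetical, and each handler returns a labeled string where a real product would call a language, image, voice, or automation service.

```python
from typing import Callable, Dict


def answer_question(payload: str) -> str:
    # Placeholder for an LLM call.
    return f"[language] answer to: {payload}"


def generate_image(payload: str) -> str:
    # Placeholder for an image generation model.
    return f"[image] concept for: {payload}"


def narrate_walkthrough(payload: str) -> str:
    # Placeholder for a text-to-speech service.
    return f"[voice] walkthrough of: {payload}"


def qualify_lead(payload: str) -> str:
    # Placeholder for rule-based workflow automation.
    return f"[automation] lead scored: {payload}"


# Hypothetical capability names mapped to their handlers.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "language": answer_question,
    "image": generate_image,
    "voice": narrate_walkthrough,
    "automation": qualify_lead,
}


def route(capability: str, payload: str) -> str:
    """Dispatch a request to the handler registered for its capability."""
    handler = HANDLERS.get(capability)
    if handler is None:
        raise ValueError(f"No handler for capability: {capability!r}")
    return handler(payload)
```

For instance, `route("language", "Is parking included?")` goes to the language handler, while an unknown capability raises an error instead of silently falling back to the LLM.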

Technology stack examples

For enterprise-grade delivery, the technology stack should match the use case instead of chasing trends.

A practical LLM stack

A typical LLM product may include:

- Frontend: React or Next.js for web dashboards, Flutter for mobile apps

- Backend: FastAPI or Node.js for orchestration and APIs

- Model layer: Hosted LLM APIs or private model deployments

- Retrieval layer: Vector database for semantic search and RAG workflows

- Data layer: PostgreSQL, object storage, and document pipelines

- Cloud: AWS for compute, storage, identity, logging, and scaling

- Security: Role-based access, audit trails, encryption, redaction, approval workflows
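
To show how the retrieval layer feeds the model layer, here is a toy retrieval sketch using only the Python standard library. It is a simplification under stated assumptions: `embed` uses word counts in place of a real embedding model, the `DOCS` list is made-up sample content standing in for a vector database, and `build_prompt` stops short of calling any model.

```python
import math
import re
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts. A real system would use an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: List[str], top_k: int = 1) -> List[str]:
    """Return the top_k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, documents: List[str]) -> str:
    """Ground the question in retrieved context before it reaches the model."""
    context = "\n".join(retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Made-up sample documents for illustration.
DOCS = [
    "Refunds are processed within five business days.",
    "Support is available Monday to Friday.",
    "Reset your password from the account settings page.",
]
```

Calling `build_prompt("reset password", DOCS)` places the password document in the context, which is the basic shape of a RAG workflow: retrieve first, then ask the model.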

A broader Generative AI stack

A wider GenAI application may combine:

- LLMs for text tasks

- Image or video generation models for creative output

- Speech-to-text and text-to-speech services

- Workflow engines for human review

- Asset storage and CDN delivery

- Analytics for prompt, usage, and performance monitoring

This is where architecture matters. A polished AI feature is not just a model call. It is a production system.

We offer end-to-end development from idea to deployment, including product strategy, UX, model integration, backend APIs, cloud setup, and rollout planning.

A step-by-step development approach

Here is a clear roadmap for companies exploring this space.

Step 1: Start with the business problem

Do not start with “We need GenAI.” Start with “We need to reduce support time,” “We need faster content operations,” or “We need a smarter product experience.”

Step 2: Classify the use case

Ask:

- Is this mostly text?

- Does it require images, audio, or video?

- Does it need enterprise knowledge grounding?

- Does it require human approval?

This step tells you whether the project is mainly LLM-led or broader Generative AI.
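
The four questions can be captured as a small checklist function. This is a sketch, not a formal method: the `UseCase` fields mirror the questions above, the component names are illustrative, and the "unclear" branch for use cases that are neither mostly text nor media is an added assumption.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class UseCase:
    mostly_text: bool
    needs_media: bool                # images, audio, or video
    needs_knowledge_grounding: bool
    needs_human_approval: bool


def classify(use_case: UseCase) -> Dict[str, object]:
    """Turn the Step 2 answers into a scope label and a list of extra components."""
    if use_case.needs_media:
        scope = "broader Generative AI"
    elif use_case.mostly_text:
        scope = "LLM-led"
    else:
        # Assumption: neither text nor media may mean generative AI is not the right tool.
        scope = "unclear, revisit the business problem"

    components: List[str] = []
    if use_case.needs_knowledge_grounding:
        components.append("retrieval layer")
    if use_case.needs_human_approval:
        components.append("human review step")
    return {"scope": scope, "components": components}
```

A support copilot that is mostly text, grounded in company documents, and reviewed by agents would come out as LLM-led with a retrieval layer and a human review step.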

Step 3: Design the workflow

Map inputs, outputs, approval steps, integrations, and risks. Decide where AI should assist, where automation should take over, and where humans must remain in control.

Step 4: Build a narrow MVP

Launch one use case first:

- A support copilot

- A document summarizer

- A product description generator

- A multimodal campaign assistant

This reduces cost and accelerates learning.

Step 5: Add governance early

Include prompt controls, audit logs, content filters, user roles, and monitoring from the beginning. AI without governance becomes technical debt very quickly.
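
As a rough illustration of what "governance from the beginning" can look like, the sketch below wraps a model call with a role check, a simple content filter, and an audit log entry. Everything here is hypothetical: `ALLOWED_ROLES`, `BLOCKED_TERMS`, and the in-memory `AUDIT_LOG` stand in for a real identity system, filtering service, and audit store.

```python
import json
import time
from typing import Callable, List

ALLOWED_ROLES = {"support_agent", "admin"}   # hypothetical roles
BLOCKED_TERMS = ("password", "ssn")          # hypothetical filter list
AUDIT_LOG: List[str] = []                    # in-memory stand-in for an audit store


def governed_call(user: str, role: str, prompt: str, llm: Callable[[str], str]) -> str:
    """Run a model call only if role and prompt pass the checks; log every attempt."""
    if role not in ALLOWED_ROLES:
        outcome, output = "denied_role", ""
    elif any(term in prompt.lower() for term in BLOCKED_TERMS):
        outcome, output = "blocked_prompt", ""
    else:
        outcome, output = "ok", llm(prompt)

    # Every attempt is recorded, including the ones that were refused.
    AUDIT_LOG.append(json.dumps(
        {"ts": time.time(), "user": user, "role": role, "outcome": outcome}
    ))

    if outcome != "ok":
        raise PermissionError(outcome)
    return output
```

Because the checks sit in a wrapper, they apply to every model call in the same way, and refused attempts still leave a trail for later review.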

Step 6: Measure value

Track business metrics, not only model metrics:

- Response quality

- Time saved

- Conversion lift

- Reduced operational load

- User adoption

- Human review rate
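
Two of these metrics, time saved and human review rate, are simple arithmetic once interactions are logged. The sketch below assumes a hypothetical `Interaction` record with a baseline handling time, an AI-assisted handling time, and a review flag; the field names are illustrative and not taken from any specific analytics tool.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Interaction:
    baseline_seconds: float   # how long the task took before AI assistance
    actual_seconds: float     # how long it took with AI assistance
    human_reviewed: bool      # whether a person had to review the output


def summarize(interactions: List[Interaction]) -> Dict[str, float]:
    """Compute total time saved and the share of outputs that needed human review."""
    count = len(interactions)
    if count == 0:
        return {"count": 0, "time_saved_seconds": 0.0, "human_review_rate": 0.0}
    saved = sum(i.baseline_seconds - i.actual_seconds for i in interactions)
    reviewed = sum(1 for i in interactions if i.human_reviewed)
    return {
        "count": count,
        "time_saved_seconds": saved,
        "human_review_rate": reviewed / count,
    }
```

A falling human review rate alongside steady response quality is one signal that a workflow is ready to scale.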

Step 7: Scale across functions

Once the first workflow proves value, extend into sales, support, operations, marketing, or product experience.

If your team is evaluating the right AI roadmap, a good next step is to schedule a free consultation and translate the use case into a clear MVP plan.

Common mistakes to avoid

The fastest way to waste time in AI is to be vague.

Mistake 1: Treating LLM and Generative AI as interchangeable

This leads to poor scoping and confusing stakeholder conversations.

Mistake 2: Building a demo instead of a system

A chatbot demo is easy. A secure, observable, enterprise-grade AI workflow is not. Real value comes from integration, governance, and reliability.

Mistake 3: Ignoring data readiness

Even the best LLM cannot help much if your documents are disorganized, outdated, or inaccessible. Clean inputs matter.

Mistake 4: Forgetting the human layer

Many teams either overtrust AI or overcontrol it. The right design keeps humans involved where judgment, compliance, or brand accuracy matter most.

Mistake 5: Choosing tools before defining outcomes

Technology should follow the use case, not the other way around.

Future trends

The next wave will be less about standalone chatbots and more about AI-native software.

We will see more products where LLMs handle language, while broader generative AI systems handle multimodal creation, personalization, and workflow execution. Enterprise tools will increasingly combine text, voice, image, search, and automation into a single experience. SaaS platforms will move from “AI features” to AI becoming part of the operating layer of the product itself.

For business leaders, that creates a clear opportunity. The winners will not be the companies that use the most AI buzzwords. They will be the ones that identify the right use cases, ship practical value, and build reliable systems around it.

Book a free strategy meeting to discuss your requirements, architecture options, and rollout priorities. Or, if you are already shaping your roadmap, talk to our experts for a realistic, product-first view of what to build now and what to phase in later.

Final thought

The simplest way to remember it is this: Generative AI is the whole creative agency, and the LLM is the language specialist inside it. Once your team understands that difference, product strategy becomes clearer, technical planning gets easier, and AI investments become far more effective.

FAQ

1. What is the difference between LLM and generative AI?

Generative AI is the broad category of AI that creates new content, including text, images, audio, video, and code. LLMs are the subset focused on understanding and generating language.

2. Are LLMs part of generative AI?

Yes, LLMs are a type of generative AI designed for language-based tasks.

3. When should I use LLM vs generative AI?

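Use an LLM-led approach when the task is mostly text. Plan for a broader generative AI stack when the product also needs images, audio, or video. Since LLMs are part of generative AI, the real choice is about scope, not one versus the other.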

4. Can businesses use both LLM and generative AI together?

Yes, combining both can create powerful AI-driven applications.

5. Is generative AI expensive to implement?

It depends on the use case. Hosted APIs usually have a lower upfront cost, while training or hosting custom models requires a larger investment.

Mar 30, 2026  By Rahul Pandit